Calibration of RGB-D cameras and hand-eye system development

Depth was added to the ATOM calibration framework as a new modality in the first half of this year. Larcc was already calibrated in a system that included a depth camera. Nevertheless, to prove that our extension of ATOM works, a series of experiments is being developed.

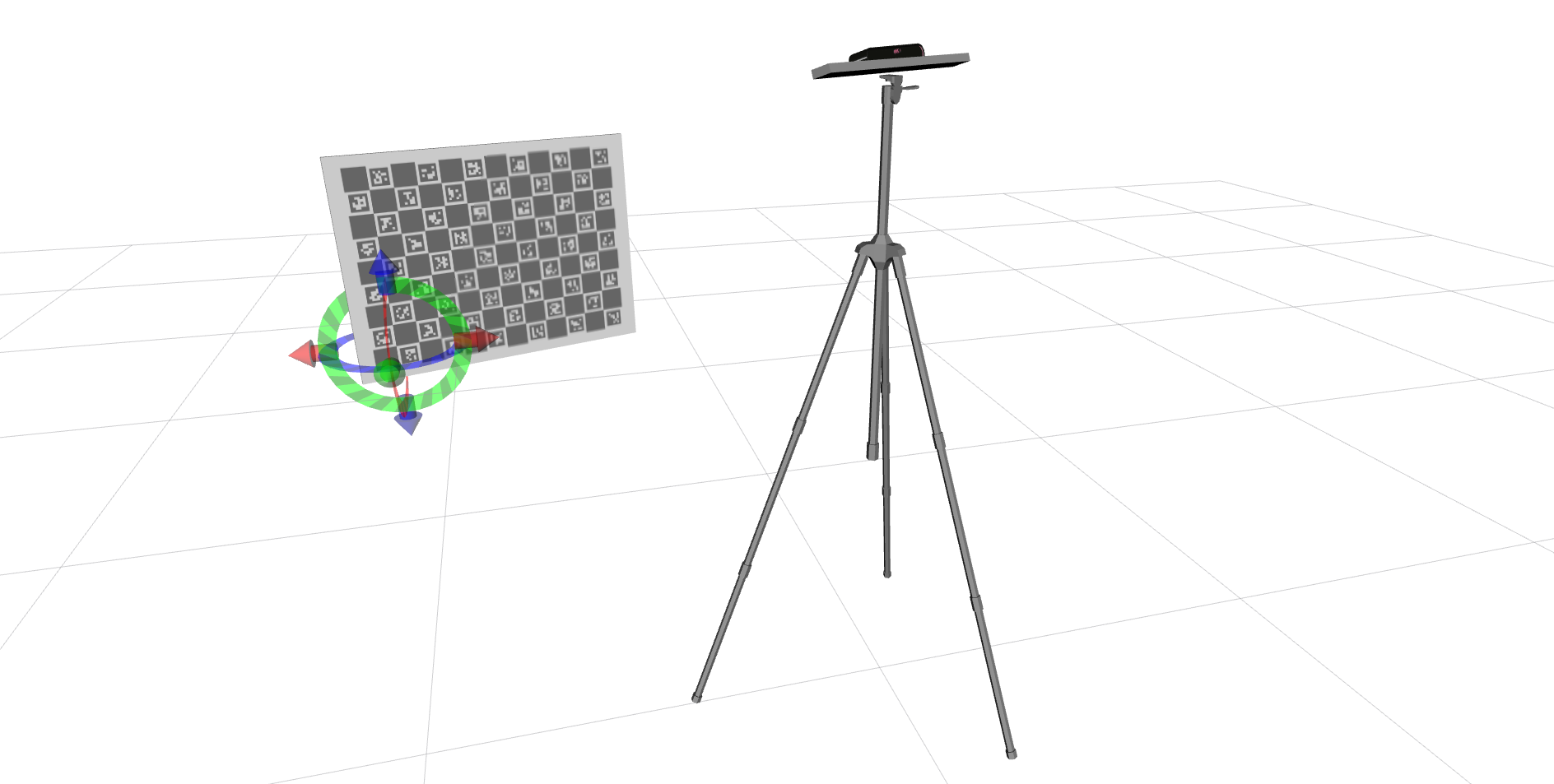

First, we developed a simple experiment to calibrate an RGB-D camera (RGB and depth sensors), as seen in the following image.

This experiment first went through a simulated phase, which showed that the calibration framework was working. The calibration was run with different initial estimate errors to check that the 3D models attached to the RGB and depth coordinate frames always ended up overlapping. We then repeated the experiment with real data from an Orbbec Astra Pro, but the 3D models always showed a small displacement, which might be caused by the registration of the depth and RGB coordinate frames that the driver performs automatically.
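To make the idea of "different initial estimate errors" concrete, here is a minimal sketch of how a known transform between two sensor frames could be perturbed by a fixed amount and how the resulting error could be measured. This is not ATOM's API: the function names (`rotation_from_axis_angle`, `perturb_transform`, `transform_error`), the example transform, and the noise magnitudes are all assumptions chosen for illustration.

```python
import numpy as np


def rotation_from_axis_angle(axis, angle):
    """Rodrigues' formula: rotation of `angle` radians about `axis`."""
    axis = np.asarray(axis, dtype=float)
    axis = axis / np.linalg.norm(axis)
    x, y, z = axis
    K = np.array([[0.0, -z, y],
                  [z, 0.0, -x],
                  [-y, x, 0.0]])
    return np.eye(3) + np.sin(angle) * K + (1.0 - np.cos(angle)) * (K @ K)


def perturb_transform(T, trans_noise_m, rot_noise_rad, rng):
    """Apply an offset of fixed magnitude, in a random direction, to a 4x4 transform."""
    direction = rng.normal(size=3)
    direction /= np.linalg.norm(direction)
    noise = np.eye(4)
    noise[:3, :3] = rotation_from_axis_angle(rng.normal(size=3), rot_noise_rad)
    noise[:3, 3] = trans_noise_m * direction
    return T @ noise


def transform_error(T_a, T_b):
    """Translation (m) and rotation (rad) difference between two 4x4 transforms."""
    delta = np.linalg.inv(T_a) @ T_b
    trans_err = np.linalg.norm(delta[:3, 3])
    cos_angle = np.clip((np.trace(delta[:3, :3]) - 1.0) / 2.0, -1.0, 1.0)
    return trans_err, np.arccos(cos_angle)


# Hypothetical ground-truth transform from the RGB frame to the depth frame.
rng = np.random.default_rng(0)
T_true = np.eye(4)
T_true[:3, 3] = [0.05, 0.0, 0.0]

# Initial estimate with 0.1 m and 0.1 rad of injected error.
T_init = perturb_transform(T_true, 0.1, 0.1, rng)
trans_err, rot_err = transform_error(T_true, T_init)
```

In simulation, the same `transform_error` measure could be applied to the calibrated transform instead of `T_init`; errors close to zero would correspond to the 3D models overlapping.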

We are now setting up the same experiment with an Asus Xtion to understand whether the problem lies with the camera or whether the methodology needs to be adapted.

In parallel, I am also working on assembling the hand-eye system in real life. We already had a fully functional simulation with a moving robot, in which I also mounted a camera on the robotic arm. On the real system, however, the collaborative robot had not yet been connected to ROS to enable communication and motion planning. I therefore adapted the larcc package to launch the nodes needed to communicate with the robot and to know its position at all times, as shown in the following video.

After this, I added a camera to the robotic arm, which is also connected with larcc and moves attached to the robot. I have also collected data and adapted the calibration package to calibrate this system. The following video shows a portion of the collected data.

Ongoing work consists of collecting a dataset for calibration and then calibrating the hand-eye system and the fixed structure.

This work should be documented in the form of a paper.
